Conversational AI — help in times of emotional need?

As we move further into the age of chatbots and conversational AI, our expectations, conscious or not, just keep growing. This increasing demand to automate every part of our lives has led top tech companies such as Apple, Facebook and Google to invest heavily in chatbot development. New strategies like Messenger-Based Sales (i.e., interacting with customers via chat apps and bots) have also been gaining momentum.

Perhaps we’re setting the bar so high because messaging apps and chatbots have surpassed social networks as the next big thing. After all, they’ve already made communication more accessible by enabling us to connect with anyone instantaneously.

However, as consumers, we expect AI-driven virtual assistants to help us live not only a more streamlined life but also a happier one. Case in point: in addition to remembering what you usually cook on Thursdays and ordering the ingredients automatically (saving you tons of time), your future intelligent assistant could lend you an ear when you need to vent your emotions, or help you through an unpleasant situation.


Humans are, by nature, social animals

Aristotle once wrote that “man is, by nature, a social animal.” This, of course, may be interpreted in various ways. From a survival perspective, we may conclude that we rely on other human beings primarily for protection and shelter. In this context, we’re social because others help keep us alive (by building houses, producing food and so on).

But that isn’t the full picture.

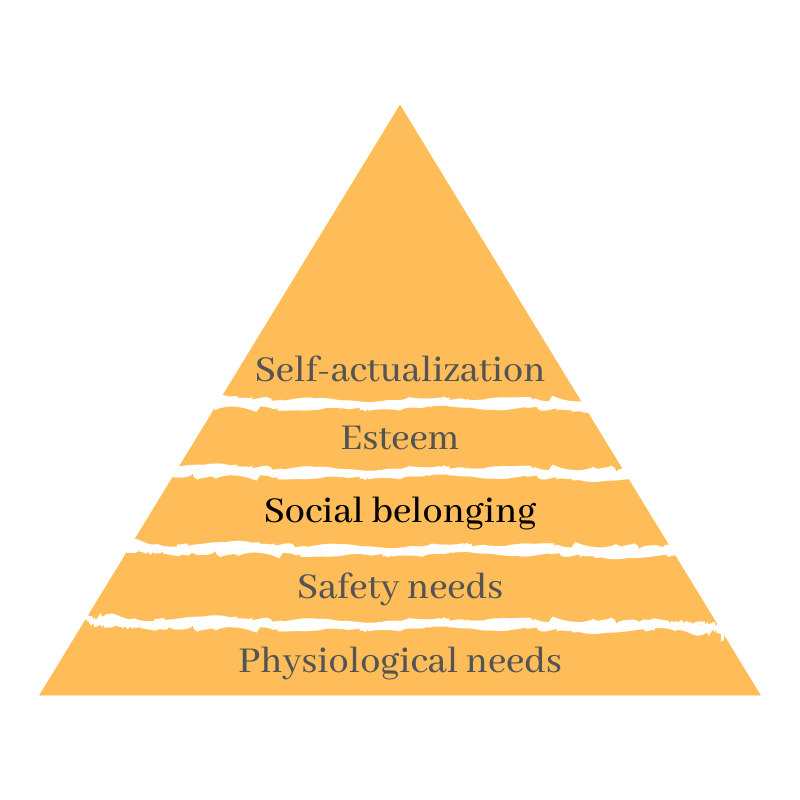

Looking at it from another angle, it’s impossible to deny that there is also an emotional need to socialize. We instinctively crave connections with others not only to survive, but also to feel loved and supported, and to reproduce. At the end of the day, how can a person live a happy life without these essentials?

Maslow’s famous Hierarchy of Needs backs up this point. According to his pyramid, the third level of human needs, love and belonging, becomes a necessity once physiological and safety needs are fulfilled.

We take these things for granted, but connection, warmth and love really are needs. Their importance becomes especially clear when they are missing. For instance, when people lack friendships or attention, or feel unloved, they become more susceptible to depression and loneliness.

It has long been envisioned that one day conversational AI chatbots will mirror human-like qualities and help us out in times of emotional need. Beyond understanding real-world situations, AI models might soon understand real-world emotions as well, with the help of convolutional neural networks (CNNs) and the activation functions that let neural networks learn complex patterns in data. But will technology be able to live up to these high expectations?

Conversational AI in the movies

While virtual assistants such as chatbots can engage in simple conversations, obey specific commands, automate our work and simplify certain aspects of our lives, they still can’t express genuine concern or help us deal with our feelings the way a human being would. That doesn’t stop us from dreaming about the future, though.

The conversational AI in Her is a perfect case in point. Samantha is an operating system that Theodore, the film’s emotionally stunted protagonist, purchases while struggling to cope with a divorce he has deliberately been putting off for a year.

Because Samantha was programmed as a positive OS, her optimistic tone, outlook on life, support and patience have a real impact on Theodore’s life. He opens up to her, and she listens to his concerns, becoming his companion when he feels lonely.

Samantha manages to show Theodore how to find joy in life again. This leads to him falling in love with the ‘perfect’ OS and to the two of them developing a meaningful relationship.

Later in the film, the viewer is confronted with the question: can AI really love a human back in the same way? In Her, Samantha comes to ‘perceive’ relationships as polyamorous: the more the OS learns about relationships, the more of them she gets involved in. For Samantha, Theodore’s emotions ultimately prove too limiting.

The desire to love

The need for human connection and love from virtual companions is also explored in another movie, A.I. After Henry and Monica’s young son Martin develops a rare illness and is placed in suspended animation, the grieving couple decides to adopt a robot boy named David to fill the void left by their nearly lost child.

David is a unique artificial boy who stands out from other robots because of his human-like appearance and qualities. Although Monica is skeptical about bringing him into the home, she still activates the irreversible imprinting protocol that causes him to love her unconditionally. When the couple’s biological son is unexpectedly cured and returns home, he grows jealous of David and takes steps to get rid of him.

Instead of having David destroyed, Monica painfully leaves him in the forest and tells him to avoid humans who might destroy him. Her actions show how strong an emotional impact AI can have on an individual. And although David was created to make his parents’ lives easier, in the end he only made them harder.

We’re only at the dawn

Interestingly, Microsoft developed a conversational system known as “XiaoIce” that was released in China back in 2014. XiaoIce was designed primarily to become the user’s virtual companion, rather than to answer questions or automate requests. Like Samantha in Her, XiaoIce aims to establish an emotional connection that serves not only the user’s day-to-day needs but also their needs in the long run.

However, we still don’t fully comprehend human-level intelligence and communication. So despite recent progress in improving chatbots, building a conversational AI that replicates human connection still seems a long way off.

Old habits die hard, and as technology progresses, we expect and sometimes demand that every aspect of life get easier. What becomes more critical here is making sure that whatever we build with it, a chatbot for instance, is designed so that its interactions don’t hurt its users, as happened in Her and A.I.

Although human beings crave connection, and research at MIT even suggests that our tendency to project our feelings onto bots is rooted in our biology, it’s not clear whether bots will ever be able to give us the same in return. And even if they do, would it be what we were looking for? After all, even though we love to explore these ideas in film, no one wants dystopian consequences in their own lives.